Physicists and cosmologists are constantly postulating and testing new ideas to explain the universe and everything within it. Over the last hundred years or so, two such ideas have grown to explain much about our cosmos, and do so very successfully: quantum mechanics, which describes the very small, and relativity, which describes the very large. However, these two views do not reconcile, leaving theoreticians and researchers looking for a more fundamental theory of everything. One possible idea banishes the notions of time and gravity, treating them both as emergent properties of a deeper reality.

From New Scientist:

As revolutions go, its origins were haphazard. It was, according to the ringleader Max Planck, an “act of desperation”. In 1900, he proposed the idea that energy comes in discrete chunks, or quanta, simply because the smooth delineations of classical physics could not explain the spectrum of energy re-radiated by an absorbing body.

Yet rarely was a revolution so absolute. Within a decade or so, the cast-iron laws that had underpinned physics since Newton’s day were swept away. Classical certainty ceded its stewardship of reality to the probabilistic rule of quantum mechanics, even as the parallel revolution of Einstein’s relativity displaced our cherished, absolute notions of space and time. This was complete regime change.

Except for one thing. A single relict of the old order remained, one that neither Planck nor Einstein nor any of their contemporaries had the will or means to remove. The British astrophysicist Arthur Eddington summed up the situation in 1915. “If your theory is found to be against the second law of thermodynamics I can give you no hope; there is nothing for it but to collapse in deepest humiliation,” he wrote.

In this essay, I will explore the fascinating question of why, since their origins in the early 19th century, the laws of thermodynamics have proved so formidably robust. The journey traces the deep connections that were discovered in the 20th century between thermodynamics and information theory – connections that allow us to trace intimate links between thermodynamics and not only quantum theory but also, more speculatively, relativity. Ultimately, I will argue, those links show us how thermodynamics in the 21st century can guide us towards a theory that will supersede them both.

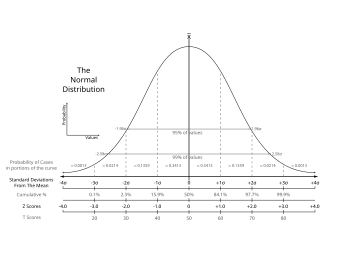

In its origins, thermodynamics is a theory about heat: how it flows and what it can be made to do (see diagram). The French engineer Sadi Carnot formulated the second law in 1824 to characterise the mundane fact that the steam engines then powering the industrial revolution could never be perfectly efficient. Some of the heat you pumped into them always flowed into the cooler environment, rather than staying in the engine to do useful work. That is an expression of a more general rule: unless you do something to stop it, heat will naturally flow from hotter places to cooler places to even up any temperature differences it finds. The same principle explains why keeping the refrigerator in your kitchen cold means pumping energy into it; only that will keep warmth from the surroundings at bay.
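
The limit on engine efficiency described above can be made concrete. The sketch below is a minimal illustration, assuming the standard textbook result (not spelled out in the article) that an ideal engine running between a hot reservoir and a cold one can turn at most a fraction 1 - T_cold/T_hot of the heat it absorbs into work. The function name and the example temperatures are illustrative choices only.

```python
def carnot_efficiency(t_hot_kelvin: float, t_cold_kelvin: float) -> float:
    """Upper bound on the fraction of absorbed heat an ideal engine can turn into work.

    Assumes the textbook Carnot limit 1 - T_cold / T_hot, with both
    temperatures given in kelvin.
    """
    if t_cold_kelvin <= 0 or t_hot_kelvin <= t_cold_kelvin:
        raise ValueError("need t_hot_kelvin > t_cold_kelvin > 0")
    return 1.0 - t_cold_kelvin / t_hot_kelvin


# Illustrative numbers: a boiler at 450 K exhausting into 300 K surroundings.
eta = carnot_efficiency(450.0, 300.0)
print(f"At most {eta:.1%} of the heat can become work; the rest flows to the cooler environment.")
```

Even this idealised engine loses two thirds of its heat to the surroundings, and the bound only approaches 100 per cent as the cold reservoir approaches absolute zero.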

A few decades after Carnot, the German physicist Rudolf Clausius explained such phenomena in terms of a quantity characterising disorder that he called entropy. In this picture, the universe works on the back of processes that increase entropy – for example dissipating heat from places where it is concentrated, and therefore more ordered, to cooler areas, where it is not.

That predicts a grim fate for the universe itself. Once all heat is maximally dissipated, no useful process can happen in it any more: it dies a “heat death”. A perplexing question is raised at the other end of cosmic history, too. If nature always favours states of high entropy, how and why did the universe start in a state that seems to have been of comparatively low entropy? At present we have no answer, and later I will mention an intriguing alternative view.

Perhaps because of such undesirable consequences, the legitimacy of the second law was for a long time questioned. The charge was formulated with the most striking clarity by the British physicist James Clerk Maxwell in 1867. He was satisfied that inanimate matter presented no difficulty for the second law. In an isolated system, heat always passes from the hotter to the cooler, and a neat clump of dye molecules readily dissolves in water and disperses randomly, never the other way round. Disorder as embodied by entropy does always increase.

Maxwell’s problem was with life. Living things have “intentionality”: they deliberately do things to other things to make life easier for themselves. Conceivably, they might try to reduce the entropy of their surroundings and thereby violate the second law.

Information is power

Such a possibility is highly disturbing to physicists. Either something is a universal law or it is merely a cover for something deeper. Yet it was only in the late 1970s that Maxwell’s entropy-fiddling “demon” was laid to rest. Its slayer was the US physicist Charles Bennett, who built on work by his colleague at IBM, Rolf Landauer, using the theory of information developed a few decades earlier by Claude Shannon. An intelligent being can certainly rearrange things to lower the entropy of its environment. But to do this, it must first fill up its memory, gaining information as to how things are arranged in the first place.

This acquired information must be encoded somewhere, presumably in the demon’s memory. When this memory is finally full, or the being dies or otherwise expires, it must be reset. Dumping all this stored, ordered information back into the environment increases entropy – and this entropy increase, Bennett showed, will ultimately always be at least as large as the entropy reduction the demon originally achieved. Thus the status of the second law was assured, albeit anchored in a mantra of Landauer’s that would have been unintelligible to the 19th-century progenitors of thermodynamics: that “information is physical”.
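
The cost of resetting the demon's memory can be put in numbers. The sketch below assumes the quantitative bound usually attributed to Landauer, which the article does not state explicitly: erasing one bit at temperature T dissipates at least k T ln 2 of heat, raising the entropy of the environment by at least k ln 2. The function names and the 300 K example are illustrative.

```python
import math

BOLTZMANN_K = 1.380649e-23  # Boltzmann's constant in J/K (exact SI value)


def min_erasure_heat_joules(bits: float, temperature_kelvin: float) -> float:
    """Least heat dissipated when erasing `bits` of memory, assuming k*T*ln(2) per bit."""
    return bits * BOLTZMANN_K * temperature_kelvin * math.log(2)


def min_entropy_increase(bits: float) -> float:
    """Least entropy (in J/K) dumped into the environment, assuming k*ln(2) per bit."""
    return bits * BOLTZMANN_K * math.log(2)


# Illustrative case: a demon with a one-gigabyte memory, reset at room temperature.
bits = 8e9
print(f"heat:    {min_erasure_heat_joules(bits, 300.0):.3e} J")
print(f"entropy: {min_entropy_increase(bits):.3e} J/K")
```

The per-bit cost at room temperature is tiny, roughly 2.9e-21 joules, but it is never zero, which is what closes the loophole.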

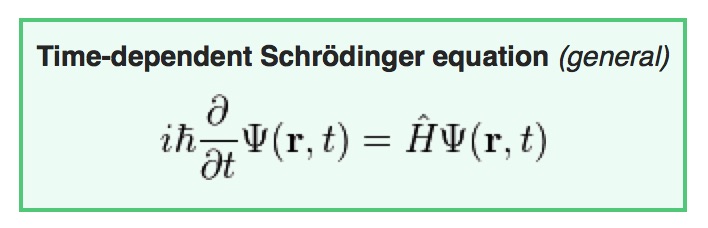

But how does this explain that thermodynamics survived the quantum revolution? Classical objects behave very differently to quantum ones, so the same is presumably true of classical and quantum information. After all, quantum computers are notoriously more powerful than classical ones (or would be if realised on a large scale).

The reason is subtle, and it lies in a connection between entropy and probability contained in perhaps the most profound and beautiful formula in all of science. Engraved on the tomb of the Austrian physicist Ludwig Boltzmann in Vienna’s central cemetery, it reads simply S = k log W. Here S is entropy – the macroscopic, measurable entropy of a gas, for example – while k is a constant of nature that today bears Boltzmann’s name. Log W is the mathematical logarithm of a microscopic, probabilistic quantity W – in a gas, this would be the number of ways the positions and velocities of its many individual atoms can be arranged.

On a philosophical level, Boltzmann’s formula embodies the spirit of reductionism: the idea that we can, at least in principle, reduce our outward knowledge of a system’s activities to basic, microscopic physical laws. On a practical, physical level, it tells us that all we need to understand disorder and its increase is probabilities. Tot up the number of configurations the atoms of a system can be in and work out their probabilities, and what emerges is nothing other than the entropy that determines its thermodynamical behaviour. The equation asks no further questions about the nature of the underlying laws; we need not care if the dynamical processes that create the probabilities are classical or quantum in origin.
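
A toy calculation shows how counting configurations produces an entropy. The sketch below assumes a deliberately simple model that is not in the article: N gas particles, each of which sits in either the left or the right half of a box, so that the number of arrangements with a given number on the left is a binomial coefficient. The logarithm in S = k log W is taken to be the natural logarithm.

```python
import math

BOLTZMANN_K = 1.380649e-23  # Boltzmann's constant in J/K (exact SI value)


def count_microstates(n_particles: int, n_left: int) -> int:
    """W for the toy model: ways to choose which particles sit in the left half."""
    return math.comb(n_particles, n_left)


def boltzmann_entropy(w: int) -> float:
    """S = k log W, using the natural logarithm."""
    return BOLTZMANN_K * math.log(w)


n = 100
for n_left in (0, 25, 50):
    w = count_microstates(n, n_left)
    print(f"{n_left:3d} on the left: W = {w:.3e}, S = {boltzmann_entropy(w):.3e} J/K")
```

With every particle crowded into one half there is a single arrangement and the entropy is zero; the evenly spread state has by far the most arrangements and therefore the highest entropy. Nothing in the count depends on whether the particles obey classical or quantum dynamics.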

There is an important additional point to be made here. Probabilities are fundamentally different things in classical and quantum physics. In classical physics they are “subjective” quantities that constantly change as our state of knowledge changes. The probability that a coin toss will result in heads or tails, for instance, jumps from ½ to 1 when we observe the outcome. If there were a being who knew all the positions and momenta of all the particles in the universe – known as a “Laplace demon”, after the French mathematician Pierre-Simon Laplace, who first countenanced the possibility – it would be able to determine the course of all subsequent events in a classical universe, and would have no need for probabilities to describe them.

In quantum physics, however, probabilities arise from a genuine uncertainty about how the world works. States of physical systems in quantum theory are represented in what the quantum pioneer Erwin Schrödinger called catalogues of information, but they are catalogues in which adding information on one page blurs or scrubs it out on another. Knowing the position of a particle more precisely means knowing less well how it is moving, for example. Quantum probabilities are “objective”, in the sense that they cannot be entirely removed by gaining more information.

That casts thermodynamics, as originally and classically formulated, in an intriguing light. There, the second law is little more than impotence written down in the form of an equation. It has no deep physical origin itself, but is an empirical bolt-on to express the otherwise unaccountable fact that we cannot know, predict or bring about everything that might happen, as classical dynamical laws suggest we can. But this changes as soon as you bring quantum physics into the picture, with its attendant notion that uncertainty is seemingly hardwired into the fabric of reality. Rooted in probabilities, entropy and thermodynamics acquire a new, more fundamental physical anchor.

It is worth pointing out, too, that this deep-rooted connection seems to be much more general. Recently, together with my colleagues Markus Müller of the Perimeter Institute for Theoretical Physics in Waterloo, Ontario, Canada, and Oscar Dahlsten at the Centre for Quantum Technologies in Singapore, I have looked at what happens to thermodynamical relations in a generalised class of probabilistic theories that embrace quantum theory and much more besides. There too, the crucial relationship between information and disorder, as quantified by entropy, survives (arxiv.org/1107.6029).

One theory to rule them all

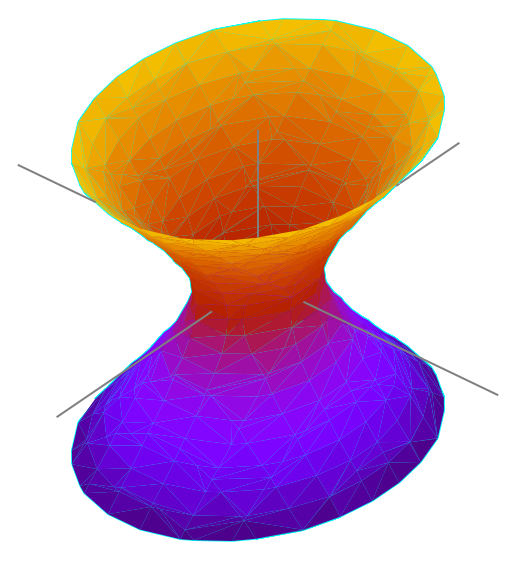

As for gravity – the only one of nature’s four fundamental forces not covered by quantum theory – a more speculative body of research suggests it might be little more than entropy in disguise (see “Falling into disorder”). If so, that would also bring Einstein’s general theory of relativity, with which we currently describe gravity, firmly within the purview of thermodynamics.

Take all this together, and we begin to have a hint of what makes thermodynamics so successful. The principles of thermodynamics are at their roots all to do with information theory. Information theory is simply an embodiment of how we interact with the universe – among other things, to construct theories to further our understanding of it. Thermodynamics is, in Einstein’s term, a “meta-theory”: one constructed from principles over and above the structure of any dynamical laws we devise to describe reality’s workings. In that sense we can argue that it is more fundamental than either quantum physics or general relativity.

If we can accept this and, like Eddington and his ilk, put all our trust in the laws of thermodynamics, I believe it may even afford us a glimpse beyond the current physical order. It seems unlikely that quantum physics and relativity represent the last revolutions in physics. New evidence could at any time foment their overthrow. Thermodynamics might help us discern what any usurping theory would look like.

For example, earlier this year, two of my colleagues in Singapore, Esther Hänggi and Stephanie Wehner, showed that a violation of the quantum uncertainty principle – the idea that you can never fully get rid of probabilities in a quantum context – would imply a violation of the second law of thermodynamics. Beating the uncertainty limit means extracting extra information about the system, which requires the system to do more work than thermodynamics allows it to do in the relevant state of disorder. So if thermodynamics is any guide, whatever any post-quantum world might look like, we are stuck with a degree of uncertainty (arxiv.org/abs/1205.6894).

My colleague at the University of Oxford, the physicist David Deutsch, thinks we should take things much further. Not only should any future physics conform to thermodynamics, but the whole of physics should be constructed in its image. The idea is to generalise the logic of the second law as it was stringently formulated by the mathematician Constantin Carathéodory in 1909: that in the vicinity of any state of a physical system, there are other states that cannot physically be reached if we forbid any exchange of heat with the environment.

James Joule’s 19th century experiments with beer can be used to illustrate this idea. The English brewer, whose name lives on in the standard unit of energy, sealed beer in a thermally isolated tub containing a paddle wheel that was connected to weights falling under gravity outside. The wheel’s rotation warmed the beer, increasing the disorder of its molecules and therefore its entropy. But hard as we might try, we simply cannot use Joule’s set-up to decrease the beer’s temperature, even by a fraction of a millikelvin. Cooler beer is, in this instance, a state regrettably beyond the reach of physics.

God, the thermodynamicist

The question is whether we can express the whole of physics simply by enumerating possible and impossible processes in a given situation. This is very different from how physics is usually phrased, in both the classical and quantum regimes, in terms of states of systems and equations that describe how those states change in time. The blind alleys down which the standard approach can lead are easiest to understand in classical physics, where the dynamical equations we derive allow a whole host of processes that patently do not occur – the ones we have to conjure up the laws of thermodynamics expressly to forbid, such as dye molecules reclumping spontaneously in water.

By reversing the logic, our observations of the natural world can again take the lead in deriving our theories. We observe the prohibitions that nature puts in place, be it on decreasing entropy, getting energy from nothing, travelling faster than light or whatever. The ultimately “correct” theory of physics – the logically tightest – is the one from which the smallest deviation gives us something that breaks those taboos.

There are other advantages in recasting physics in such terms. Time is a perennially problematic concept in physical theories. In quantum theory, for example, it enters as an extraneous parameter of unclear origin that cannot itself be quantised. In thermodynamics, meanwhile, the passage of time is entropy increase by any other name. A process such as dissolved dye molecules forming themselves into a clump offends our sensibilities because it appears to amount to running time backwards as much as anything else, although the real objection is that it decreases entropy.

Apply this logic more generally, and time ceases to exist as an independent, fundamental entity, becoming instead one whose flow is determined purely in terms of allowed and disallowed processes. With it go problems such as the one I alluded to earlier, of why the universe started in a state of low entropy. If states and their dynamical evolution over time cease to be the question, then anything that does not break any transformational rules becomes a valid answer.

Such an approach would probably please Einstein, who once said: “What really interests me is whether God had any choice in the creation of the world.” A thermodynamically inspired formulation of physics might not answer that question directly, but leaves God with no choice but to be a thermodynamicist. That would be a singular accolade for those 19th-century masters of steam: that they stumbled upon the essence of the universe, entirely by accident. The triumph of thermodynamics would then be a revolution by stealth, 200 years in the making.

Read the entire article here.
