My pet octopus has moods. It can change the color of its skin on demand. It watches me with its huge eyes. It’s inquisitive and can manipulate objects. Importantly, my octopus has around half a billion neurons in its nervous system, compared with around 100 billion in mine and around 50 million in your pet gerbil’s.

OK, let me stop for a moment. I don’t actually have a pet octopus. But the rest, about the octopus’s remarkable abilities, is true. So, does it have a mind, and is it sentient?

From The Atlantic:

Drawing on the work of other researchers, from primatologists to fellow octopologists and philosophers, Godfrey-Smith suggests two reasons for the large nervous system of the octopus. One has to do with its body. For an animal like a cat or a human, details of the skeleton dictate many of the motions the animal can make. You can’t roll your arm into a neat spiral from wrist to shoulder—your bones and joints get in the way. An octopus, having no skeleton, has no such constraint. It can, and frequently does, roll up some of its arms; or it can choose to make one (or several) of them stiff, creating an elbow. Surely the animal needs a huge number of neurons merely to be well coordinated when roaming about the reef.

At the same time, octopuses are versatile predators, eating a wide variety of food, from lobsters and shrimps to clams and fish. Octopuses that live in tide pools will occasionally leap out of the water to catch passing crabs; some even prey on incautious birds, grabbing them by the legs, pulling them underwater, and drowning them. Animals that evolve to tackle diverse kinds of food may tend to evolve larger brains than animals that always handle food in the same way (think of a frog catching insects).

…

Like humans, octopuses learn new skills. In some species, individuals inhabit a den for only a week or so before moving on, so they are constantly learning routes through new environments. Similarly, the first time an octopus tackles a clam, say, it has to figure out how to open it—can it pull it apart, or would it be more effective to drill a hole? If consciousness is necessary for such tasks, then perhaps the octopus does have an awareness that in some ways resembles our own.

Perhaps, indeed, we should take the “mammalian” behaviors of octopuses at face value. If evolution can produce similar eyes through different routes, why not similar minds? Or perhaps, in wishing to find these animals like ourselves, what we are really revealing is our deep desire not to be alone.

Read the entire article here.

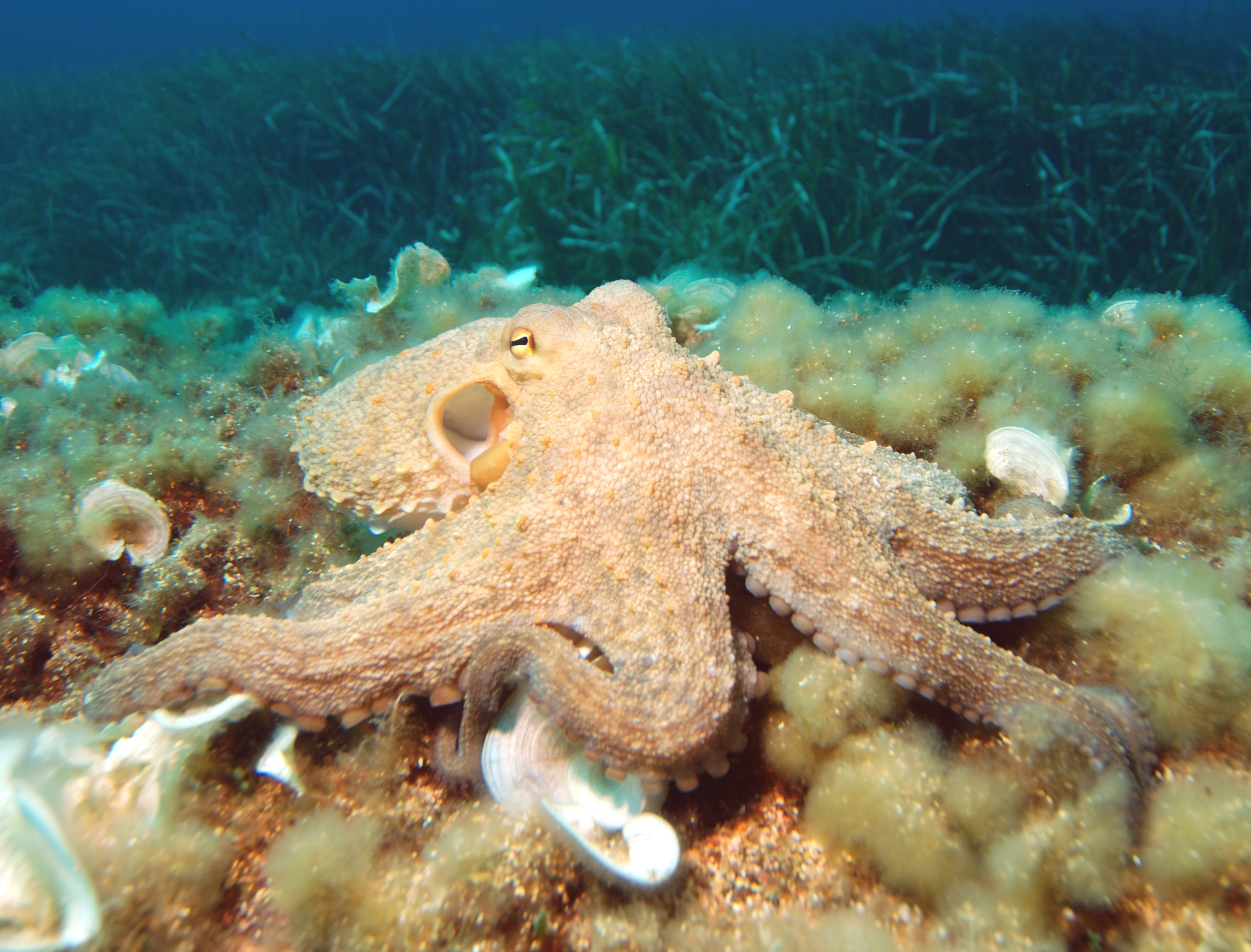

Image: Common octopus. Courtesy: Wikipedia. CC BY-SA 3.0.