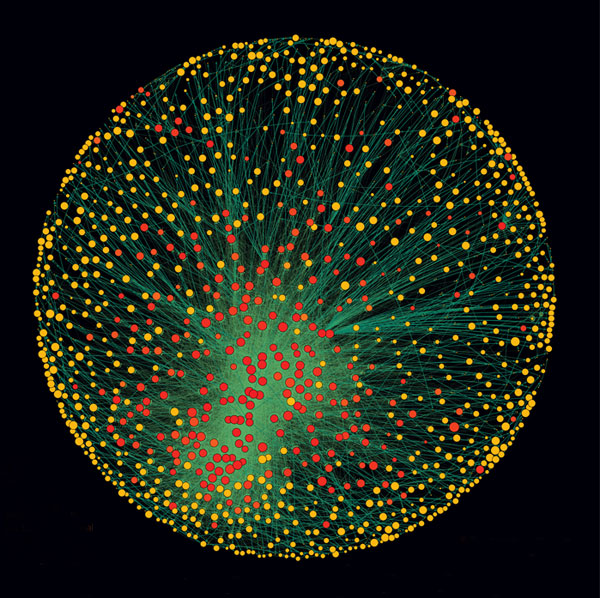

Six degrees of separation is a commonly held urban myth that, on average, everyone on Earth is six connections or fewer away from any other person. That is, through a chain of friend-of-a-friend (of a friend, etc.) relationships you can find yourself linked to the President, the Chinese Premier, a farmer on the steppes of Mongolia, Nelson Mandela, the editor of theDiagonal, and any one of the other 7 billion people on the planet.

The recent notion of degrees of separation stems from original research by Michael Gurevich at the Massachusetts Institute of Technology on the structure of social networks in 1961. Subsequently, an Austrian mathematician, Manfred Kochen, proposed in his theory of connectedness for a U.S.-sized population that “it is practically certain that any two individuals can contact one another by means of at least two intermediaries.” In 1967 psychologist Stanley Milgram and colleagues validated this through their acquaintanceship network experiments on what was then called the Small World Problem. In one example, 296 volunteers were asked to send a message by postcard, through friends and then friends of friends, to a specific person living near Boston. Milgram’s work, published in Psychology Today, showed that people in the United States seemed to be connected by approximately three friendship links, on average. The experiment generated a tremendous amount of publicity, and as a result he is to this day incorrectly credited with originating the ideas and quantification of interconnectedness, and even the phrase “six degrees of separation”.

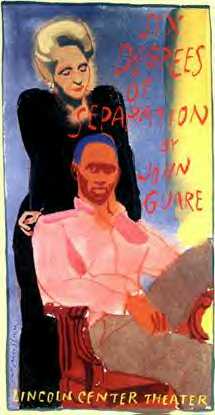

In fact, the statement was originally articulated in 1929 by Hungarian author Frigyes Karinthy and later popularized in a play written by John Guare. Karinthy believed that the modern world was ‘shrinking’ due to the accelerating interconnectedness of humans. He hypothesized that any two individuals could be connected through at most five acquaintances. In 1990, playwright John Guare unveiled a play (followed by a movie in 1993) titled “Six Degrees of Separation”. This popularized the notion and enshrined it in popular culture. In the play one of the characters reflects on the idea that any two individuals are connected by at most five others:

I read somewhere that everybody on this planet is separated by only six other people. Six degrees of separation between us and everyone else on this planet. The President of the United States, a gondolier in Venice, just fill in the names. I find it A) extremely comforting that we’re so close, and B) like Chinese water torture that we’re so close because you have to find the right six people to make the right connection… I am bound to everyone on this planet by a trail of six people.

Then in 1994 along came the Kevin Bacon trivia game, “Six Degrees of Kevin Bacon”, invented as a play on the original concept. The goal of the game is to link any actor to Kevin Bacon through no more than six connections, where two actors are connected if they have appeared in a movie or commercial together.

Now, in 2011, comes a study of the connectedness of Facebook users. Using Facebook’s population of over 700 million users, researchers found that the average number of links from any arbitrarily selected user to another was 4.74; for Facebook users in the U.S., the average number of links was just 4.37. Facebook posted detailed findings on its site.
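For the curious, here is a minimal sketch of what “average number of links” means, using a breadth-first search over a tiny made-up friendship network. The names `average_separation` and `toy_network` are hypothetical, invented for this illustration; this is not the method the Facebook researchers used, and an exhaustive search like this is only practical for small networks.

```python
from collections import deque


def average_separation(graph):
    """Mean number of links on the shortest path between every pair of
    distinct people who are connected at all (illustrative only)."""
    total_links = 0
    pairs = 0
    for start in graph:
        # Breadth-first search: distance in links from `start` to everyone reachable.
        dist = {start: 0}
        queue = deque([start])
        while queue:
            person = queue.popleft()
            for friend in graph[person]:
                if friend not in dist:
                    dist[friend] = dist[person] + 1
                    queue.append(friend)
        total_links += sum(dist.values())
        pairs += len(dist) - 1  # exclude the starting person
    return total_links / pairs if pairs else 0.0


# Hypothetical four-person chain of friends: Ann - Bob - Cat - Dan.
toy_network = {
    "Ann": ["Bob"],
    "Bob": ["Ann", "Cat"],
    "Cat": ["Bob", "Dan"],
    "Dan": ["Cat"],
}

print(round(average_separation(toy_network), 2))  # 1.67
```

In this toy chain Ann and Dan are three links apart, while neighbors are one link apart, so the average works out to about 1.67 links.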

So, the Small World Problem popularized by Milgram and colleagues is actually becoming smaller as Frigyes Karinthy had originally suggested back in 1929. As a result, you may not be as “far” from the Chinese Premier or Nelson Mandela as you may have previously believed.

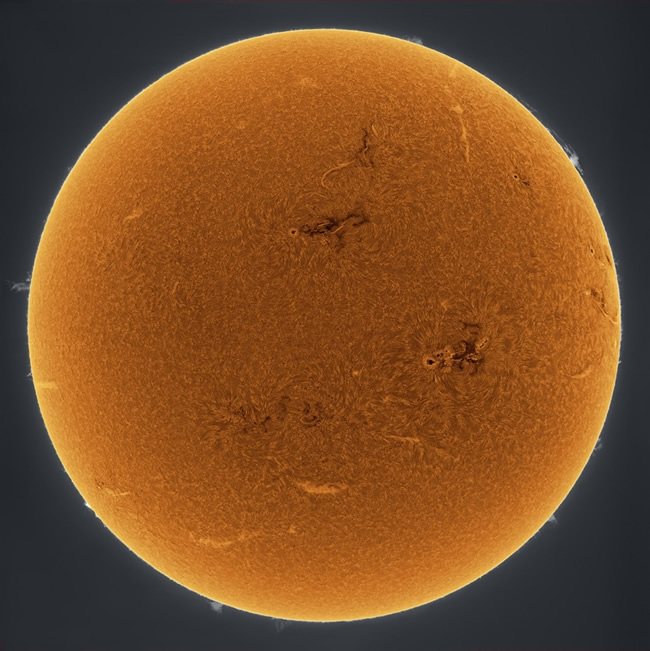

[div class=attrib]Image: Six Degrees of Separation Poster by James McMullan. Courtesy of Wikipedia.[end-div]

Dante Alighieri is held in high regard in Italy, where he is often referred to as il Poeta, the poet. He is best known for the monumental poem La Commedia, later renamed La Divina Commedia – The Divine Comedy. Scholars consider it to be the greatest work of literature in the Italian language. Many also consider Dante to be the symbolic father of the Italian language.

The ubiquity of point-and-click digital cameras and camera-equipped smartphones seems to be leading us towards an era where it is more common to snap and share a picture of the present via a camera lens than it is to experience the present individually and through one’s own eyes.

The hippies of the sixties wanted love; the beatniks sought transcendence. Then came the punks, who were all about rage. The slackers and generation X stood for apathy and worry. And now, coming of age, we have generation Y, also known as the “millennials”, whose birthdays fall roughly between 1982 and 2000.

Daniel Kahneman brings together for the first time his decades of groundbreaking research and profound thinking in social psychology and cognitive science in his new book, Thinking, Fast and Slow. He presents his current understanding of judgment and decision making and offers insight into how we make choices in our daily lives. Importantly, Kahneman describes how we can identify and overcome the cognitive biases that frequently lead us astray. This is an important work by one of our leading thinkers.

A chronicler of the human condition and deeply personal emotion, poet Sharon Olds is no shrinking violet. Her contemporary poems have been both highly praised and condemned for their explicit frankness and intimacy.

Recollect the piped “Muzak” that once played, and still plays, in many hotel elevators and public waiting rooms. Remember the perfectly designed mood music in restaurants and museums. Now, re-imagine the ambient soundscape dynamically customized for a space based on the music preferences of the people inhabiting that space. Well, there is a growing list of apps for that.

The United States spends around $2.5 trillion per year on health care. Approximately 14 percent of this is administrative spending. That’s $360 billion, yes, billion with a ‘b’, annually. And, by all accounts, a significant proportion of this huge sum is duplicate, redundant, wasteful and unnecessary spending; that’s a lot of paperwork.

The unfolding financial crises and political upheavals in Europe have claimed several casualties, notably the leaders and governments of both Greece and Italy. Both have been replaced by so-called “technocrats”. So, what is a technocrat and why? State explains.

Long before the first galaxy clusters and the first galaxies appeared in our universe, and before the first stars, came the first basic elements — hydrogen, helium and lithium.

In early 2010 a Japanese research team grew retina-like structures from a culture of mouse embryonic stem cells. Now, only a year later, the same team at the RIKEN Center for Developmental Biology announced their success in growing a much more complex structure following a similar process — a mouse pituitary gland. This is seen as another major step towards bioengineering replacement organs for human transplantation.

[div class=attrib]From Wired:[end-div]

Poet, essayist and playwright Todd Hearon grew up in North Carolina. He earned a PhD in editorial studies from Boston University. He is the winner of a number of national poetry and playwriting awards, including the 2007 Friends of Literature Prize and a Dobie Paisano Fellowship from the University of Texas at Austin.

Joy Harjo is an acclaimed poet, musician and noted teacher. Her poetry is grounded in the United States’ Southwest and often encompasses Native American stories and values.

We promise. There is no screeching embedded audio of someone slowly dragging a piece of chalk, or worse, fingernails, across a blackboard! Though, even the thought of this sound causes many to shudder. Why? A plausible explanation over at Wired UK.

The lowly incandescent light bulb continues to come under increasing threat. First came the fluorescent tube, then the compact fluorescent. More recently the LED (light-emitting diode) seems to be gaining ground. Now LED technology takes another leap forward with printed LED “light sheets”.