Ask a hundred people how science can be used for good and you’re likely to get a hundred different answers. Well, Edge Magazine did just that, posing the question “What scientific concept would improve everybody’s cognitive toolkit?” to 159 critical thinkers. Below we excerpt some of our favorites. The thoroughly engrossing, novel-length article can be found here in its entirety.

[div class=attrib]From Edge:[end-div]

Ether

Richard H. Thaler. Father of behavioral economics.

I recently posted a question in this space asking people to name their favorite example of a wrong scientific belief. One of my favorite answers came from Clay Shirky. Here is an excerpt:

The existence of ether, the medium through which light (was thought to) travel. It was believed to be true by analogy — waves propagate through water, and sound waves propagate through air, so light must propagate through X, and the name of this particular X was ether.

It’s also my favorite because it illustrates how hard it is to accumulate evidence for deciding something doesn’t exist. Ether was both required by 19th century theories and undetectable by 19th century apparatus, so it accumulated a raft of negative characteristics: it was odorless, colorless, inert, and so on.

Ecology

Brian Eno. Artist; Composer; Recording Producer: U2, Coldplay, Talking Heads, Paul Simon.

That idea, or bundle of ideas, seems to me the most important revolution in general thinking in the last 150 years. It has given us a whole new sense of who we are, where we fit, and how things work. It has made commonplace and intuitive a type of perception that used to be the province of mystics — the sense of wholeness and interconnectedness.

Beginning with Copernicus, our picture of a semi-divine humankind perfectly located at the centre of The Universe began to falter: we discovered that we live on a small planet circling a medium sized star at the edge of an average galaxy. And then, following Darwin, we stopped being able to locate ourselves at the centre of life. Darwin gave us a matrix upon which we could locate life in all its forms: and the shocking news was that we weren’t at the centre of that either — just another species in the innumerable panoply of species, inseparably woven into the whole fabric (and not an indispensable part of it either). We have been cut down to size, but at the same time we have discovered ourselves to be part of the most unimaginably vast and beautiful drama called Life.

We Are Not Alone In The Universe

J. Craig Venter. Leading scientist of the 21st century.

I cannot imagine any single discovery that would have more impact on humanity than the discovery of life outside of our solar system. There is a human-centric, Earth-centric view of life that permeates most cultural and societal thinking. Finding that there are multiple, perhaps millions of origins of life and that life is ubiquitous throughout the universe will profoundly affect every human.

Correlation is not a cause

Susan Blackmore. Psychologist; Author, Consciousness: An Introduction.

The phrase “correlation is not a cause” (CINAC) may be familiar to every scientist but has not found its way into everyday language, even though critical thinking and scientific understanding would improve if more people had this simple reminder in their mental toolkit.

One reason for this lack is that CINAC can be surprisingly difficult to grasp. I learned just how difficult when teaching experimental design to nurses, physiotherapists and other assorted groups. They usually understood my favourite example: imagine you are watching at a railway station. More and more people arrive until the platform is crowded, and then — hey presto — along comes a train. Did the people cause the train to arrive (A causes B)? Did the train cause the people to arrive (B causes A)? No, they both depended on a railway timetable (C caused both A and B).

A Statistically Significant Difference in Understanding the Scientific Process

Diane F. Halpern. Professor, Claremont McKenna College; Past-president, American Psychological Society.

Statistically significant difference: it is a simple phrase that is essential to science and that has become common parlance among educated adults. These three words convey a basic understanding of the scientific process, random events, and the laws of probability. The term appears almost everywhere that research is discussed: in newspaper articles, advertisements for “miracle” diets, research publications, and student laboratory reports, to name just a few of the many diverse contexts where the term is used. It is a shorthand abstraction for a sequence of events that includes an experiment (or other research design), the specification of a null and alternative hypothesis, (numerical) data collection, statistical analysis, and the probability of an unlikely outcome. That is a lot of science conveyed in a few words.

Confabulation

Fiery Cushman. Post-doctoral fellow, Mind/Brain/Behavior Interfaculty Initiative, Harvard University.

We are shockingly ignorant of the causes of our own behavior. The explanations that we provide are sometimes wholly fabricated, and certainly never complete. Yet, that is not how it feels. Instead it feels like we know exactly what we’re doing and why. This is confabulation: Guessing at plausible explanations for our behavior, and then regarding those guesses as introspective certainties. Every year psychologists use dramatic examples to entertain their undergraduate audiences. Confabulation is funny, but there is a serious side, too. Understanding it can help us act better and think better in everyday life.

We are Lost in Thought

Sam Harris. Neuroscientist; Chairman, The Reason Project; Author, Letter to a Christian Nation.

I invite you to pay attention to anything — the sight of this text, the sensation of breathing, the feeling of your body resting against your chair — for a mere sixty seconds without getting distracted by discursive thought. It sounds simple enough: Just pay attention. The truth, however, is that you will find the task impossible. If the lives of your children depended on it, you could not focus on anything — even the feeling of a knife at your throat — for more than a few seconds, before your awareness would be submerged again by the flow of thought. This forced plunge into unreality is a problem. In fact, it is the problem from which every other problem in human life appears to be made.

I am by no means denying the importance of thinking. Linguistic thought is indispensable to us. It is the basis for planning, explicit learning, moral reasoning, and many other capacities that make us human. Thinking is the substance of every social relationship and cultural institution we have. It is also the foundation of science. But our habitual identification with the flow of thought — that is, our failure to recognize thoughts as thoughts, as transient appearances in consciousness — is a primary source of human suffering and confusion.

Knowledge

Mark Pagel. Professor of Evolutionary Biology, Reading University, England and The Santa Fe.

The Oracle of Delphi famously pronounced Socrates to be “the most intelligent man in the world because he knew that he knew nothing”. Over 2000 years later the physicist-turned-historian Jacob Bronowski would emphasize, in the last episode of his landmark 1970s television series “The Ascent of Man”, the danger of our all-too-human conceit of thinking we know something. What Socrates knew and what Bronowski had come to appreciate is that knowledge, true knowledge, is difficult, maybe even impossible, to come by; it is prone to misunderstanding and counterfactuals; and, most importantly, it can never be acquired with exact precision. There will always be some element of doubt about anything we come to “know” from our observations of the world.

[div class=attrib]More from theSource here.[end-div]

Neuroscientists continue to find interesting experimental evidence that we do not have free will. Many philosophers continue to dispute this notion and cite inconclusive results and lack of holistic understanding of decision-making on the part of brain scientists. An article by Kerri Smith over at Nature lays open this contentious and fascinating debate.

“You cannot be serious”, goes the oft-quoted opening to a John McEnroe javelin thrown at an unsuspecting tennis umpire. This leads us to an earnest review of what it means to be serious from Lee Siegel’s new book, “Are You Serious?” As Michael Agger points out for Slate:

Twenty or so years ago the economic prognosticators and technology pundits would all have had us believe that the internet would transform society; it would level the playing field; it would help the little guy compete against the corporate behemoth; it would make us all “socially” rich if not financially. Yet, the promise of those early, heady days seems remarkably narrow nowadays. What happened? Or rather, what didn’t happen?

As any Italian speaker would attest, the moon, of course, is utterly feminine. It is “la luna”. Now, to a German it is “der Mond”, and very masculine.

This week theDiagonal triangulates its sights on the topic of language and communication. So, we introduce an apt poem by Robert Duncan. Of Robert Duncan, Poetry Foundation writes:

[div class=attrib]From Neuroskeptic:[end-div]

[div class=attrib]From Slate:[end-div]

[div class=attrib]From Project Syndicate:[end-div]

Frequent fliers the world over may soon find themselves thanking a physicist named Jason Steffen. Back in 2008 he ran some computer simulations to find a more efficient way for travelers to board an airplane. Recent tests inside a mock cabin interior confirmed that Steffen’s model is both faster for the airline and easier for passengers and, best of all, means less time spent waiting in the aisle and jostling for overhead bin space.

Labor Day traditionally signals the end of summer. A poem by Gwendolyn Brooks sets the mood. She was the first black author to win the Pulitzer Prize.

Cities are one of the most remarkable and peculiar inventions of our species. They provide billions in the human family a framework for food, shelter and security. Increasingly, cities are becoming hubs in a vast data network where public officials and citizens mine and leverage vast amounts of information.

[div class=attrib]Thomas Rogers for Slate:[end-div]

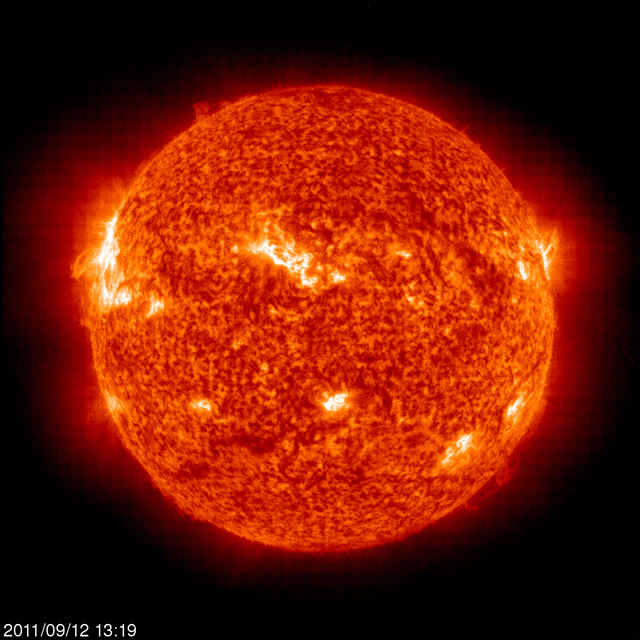

Cosmologists and particle physicists have over the last decade or so proposed the existence of Dark Matter. It’s so called because it cannot be seen or sensed directly. It is inferred from gravitational effects on visible matter. Together with its theoretical cousin, Dark Energy, it is hypothesized to make up most of the universe. In fact, the regular star-stuff (matter and energy) of which we, our planet, solar system and the visible universe are made accounts for only a paltry 4 percent.

[div class=attrib]From Scientific American:[end-div]

[div class=attrib]From Slate:[end-div]

[div class=attrib]From Frank Jacobs for Strange Maps:[end-div]

Why do some words take hold in the public consciousness and persist through generations while others fall by the wayside after one season?

A poem by Billy Collins ushers in another week. Collins served two terms as the U.S. Poet Laureate, from 2001 to 2003. He is known for poetry imbued with left-field humor and deep insight.

Jonathan Ive, the design brains behind such iconic contraptions as the iMac, iPod and the iPhone, discusses his notion of “undesign”. Ive has over 300 patents and is often cited as one of the most influential industrial designers of the last 20 years. Perhaps it’s purely coincidental that Ive’s understated “undesign” comes from his unassuming Britishness.